Introduction

This blog post discusses tactical workloads on a Teradata system. Despite Teradata’s implementation of features that support tactical workloads, this workload category remains challenging to manage. Selecting an optimal physical design is essential to meet user expectations for query speed.

Designing the Teradata tactical workload on a test environment can be frustrating, especially when it fails to meet user expectations on the production system.

I’ve observed that applications executing tactical queries often don’t satisfy users’ expectations. This is because they’re built with the same approach as a data warehouse designed for strategic workloads.

Consider a web application that enables users to access information about individual customers. This task pertains to the traditional OLTP model and may not be a fitting workload for a data warehouse system. Typically, users will anticipate the ability to navigate through a customer’s data promptly. Performance is critical to maintaining usability.

To ensure user satisfaction, it is crucial to attain consistent query run times. Inconsistencies, where a query may take 2 seconds at times and several minutes at others, are unacceptable. Unfortunately, I have frequently encountered this issue due to designers’ oversight of tactical workload specifics during physical database design. Typically, applications are developed on unloaded test systems, leading to surprises when competing with the production workload upon deployment.

The Cause of Performance Problems for Tactical Queries

Teradata is a database system optimized for parallel processing and efficiently reading large data sets. However, for tactical workloads, the focus is on optimizing access to individual rows on disk for efficient reading and writing.

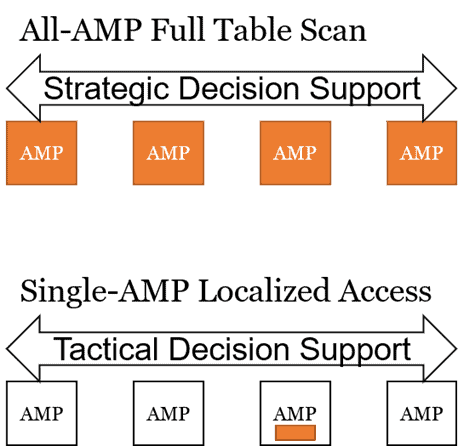

Full table scans hinder tactical queries in Teradata, as their runtime is dependent on the overall load of the system. While enhancing runtimes by enlarging the system is possible, such a course of action is often not practical. Even with system expansion, Teradata’s query results may be slightly faster, but runtime remains unreliable. Sometimes, organizations purchase a dedicated Teradata system solely for their isolated tactical application. However, this solution is frequently unattainable for various reasons. This leads to the question: why restrict oneself to a Teradata system when a specialized system for tactical workloads can be utilized instead?

The aim of tactical query tuning is to reduce the required IOs to access a single or a few rows on a single AMP. Ideally, the query execution plan should localize the work to only one AMP and require just one data block transfer from disk to memory.

Teradata Workload Management facilitates tactical queries that possess the aforementioned attributes through a tactical workload group. This group reserves AWTs and assigns them exclusively for single-AMP accesses, ensuring tactical queries are promptly executed without waiting for AWT availability.

If a query does not perform as expected, it is immediately downgraded to a lower-priority workload group to safeguard valuable resources. Further details will be provided in this blog post.

We strive for a single AMP access query to ensure consistent execution time. Thus, obtaining a stable execution plan with accurate statistics is imperative.

How to Design Tactical Workload in Teradata

We must differentiate between retrieval steps and joining steps.

Optimizing retrieve steps is simpler than optimizing join steps. To achieve this, we require primary index access in the form of UPI, NUPI, or a single table join index. By ensuring primary index access, we can localize data rows within minimal blocks and maintain a consistent access time.

Joins are unique workloads that typically involve most or all AMPs. However, we can configure the workload to utilize nested and merge joins, which involve only a few AMPs. These are the only two join approaches that the Optimizer can limit to one or a few AMPs. The optimal choice is the nested join, which can serve as an initial step to filter down to a single row.

Single-AMP operations offer the added benefit of utilizing ROWHASH-level locking during retrieval, eliminating the need for more intrusive locking methods. This increases concurrency when multiple tactical queries concurrently access the same table.

Our main objective in designing tactical workloads is to acquire an execution plan primarily involving single-AMP or group-AMP steps rather than full table scans.

As previously stated, the primary index access is our key tool in each step. Thus, employing a database specialized for the application that submits tactical queries can often provide benefits. In doing so, we can model indexes as we require. However, this may not always be feasible. In my experience, the same database is frequently utilized for tactical and strategic workloads, and the option to freely select the primary index is unavailable. Nevertheless, creating direct access is still plausible with Join Index or secondary indexes.

One effective technique is to create a table join index that functions as a “hashed” NUSI. This approach offers both the advantages of direct access and the flexibility of a non-unique index.

In row-partitioned tables, the partition columns must be included in the primary index to guarantee that a row is searched in a single partition.

Tactical Workload Exceptions as Tuning Help

To achieve peak performance, Teradata must accurately identify tactical workloads and ensure queries are processed exclusively in the appropriate tier without prematurely being downgraded to a lower-priority tier.

To prevent a single inefficient query from causing system-wide issues, Teradata has implemented security measures within the tactical workload tier. These measures, known as “Tactical Workload Exceptions,” monitor CPU usage and IO consumption and set specific limits. Queries that exceed these limits are automatically moved to a lower-priority workload.

We can set limits for IO and CPU usage for all combined nodes or individual nodes.

The priority scheduler manages the limits per node (as an independent computer), and TASM controls the summed-up limits.

Teradata sets default limits for the tactical workload as follows:

- Maximum 2 CPU seconds per node

- Maximum 200 megabytes per node

For the overall limits, we need to multiply these numbers by the total number of nodes.

Although it must be done cautiously due to the potentially significant impact on the system’s performance, it is possible to modify these limits.

The limits per node only affect the node which exceeds the limit. That is, only on specific nodes may the AMP worker tasks of a query be moved from the tactical tier to one with lower priority. This will happen if the query has high skew and one or a few AMPs have most of the load.

Conversely, universal limits are enforced across all nodes. If the aggregate limit, such as the number of CPU seconds, is surpassed, the workload will be given lower priority on all nodes.

Tuning with Teradata Workload Exceptions

To determine whether a tactical workload exception has downgraded a query, the DBC.DBQLOGTBL table can provide this information. A tactical workload exception has occurred if the WDID and FinalWDID values differ. The TacticalCPUException and TacticalIOException columns indicate the number of nodes that threw an exception in this scenario.

Utilize the query log to create a tactical workload and verify its movement within the tactical tier. Avoid relying solely on run times. Additionally, review the DBC.DBQLOGTBL table to determine the number of AMPs utilized in each step.

Final Considerations

It is crucial to supplement tuning measures with workload management to support tactical queries and avoid blocking. Object locks (LOCKING FOR ACCESS) can effectively reduce blocking. Timely execution of tactical workload is essential to prevent workload delays. Optimized tactical queries should not cause delays for the Teradata user. End-users are unlikely to distinguish the reason for delayed results and may not be concerned about it.

Related Services

⚡ Need Help Optimizing Your Data Platform?

We cut data platform costs by 30–60% without hardware changes. 25+ years of hands-on tuning experience.

Explore Our Services →📋 Considering a Move From Teradata?

Get a personalized migration roadmap in 2 minutes. We have migrated billions of rows from Teradata to Snowflake, Databricks, and more.

Free Migration Assessment →

Nice article, thanks. The “special trick” was interesting! We use dedicated database for web-service client/purpose. A stored proc runs periodically and re-structures source data to fit the definition of table/index designed as needed. The single/few AMP-query is packaged in a macro with input parameters. This to restrict the client (allow only execute macro). A dedicated TD UserName is created for the purpose.

Tip: You should pay attention to the workload setting, the initial priority given to the query/user, and the time allowed before given reduced priority.

You should set requirements on the client-side environment. Let’s say it is a MS web server component using the Teradata .NET Data Provider with default settings, connection pooling is on, and it runs on a clustered 4 node web server. The default max pool size is 100 (seen on version 16). From all 4 nodes on a very busy day, that could result in 400 sessions from one tactical client. Say you support several such client services on one Teradata system, and do the math. Then ask your TD DBA how many sessions and AWTs are available at hot hours. Probably you would require the client to set a relatively short query response timeout value depending on your use-case (range around 6 to 20 sec maybe?), connection pooling is beneficial, but restrict the size depending on your situation: A max pool size of 5 for a TD Data Provider that runs on 4 nodes means you allow 20 sessions. Go tactical! Best regards Arent