As a Teradata SQL specialist, I find skew to be a common challenge. In fact, approximately 90% of SQL performance difficulties stem from skew, based on my experience. However, this article focuses on the potential for minor modifications to yield significant improvements.

This article highlights the potential consequences of selecting inappropriate character sets for SQL performance, a commonly neglected aspect.

How it all began

While reviewing the QryLog table, I noticed a DELETE statement that was significantly impacting CPU usage and resulting in excessive IO operations. In fact, this statement caused more IOs than any other daily workload.

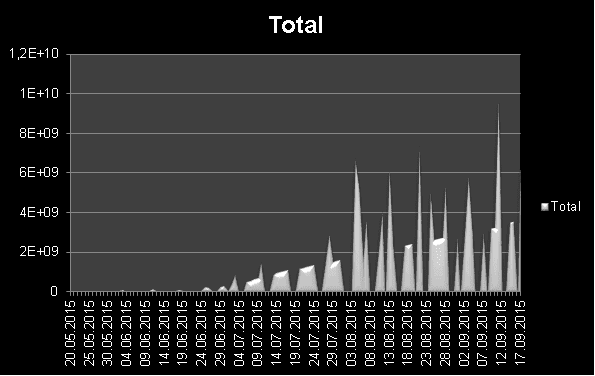

Moreover, resource usage appeared to be escalating rapidly. The chart below demonstrates that an average of 50 million rows were added daily.

The DELETE statement is executed on a massive table named “TheTable” with nearly 3 billion records. A subquery is utilized to identify the specific rows for deletion, with the table “TheVolatileTable” used for filtering, containing only one row.

WHERE (COL1, COL2, COL3, COL4) IN (SELECT COL1, COL2, COL3, COL4 FROM TheVolatileTable);

Below you can see the table definitions of both involved tables:

PK BIGINT NOT NULL GENERATED ALWAYS AS IDENTITY (START WITH 1 INCREMENT BY 1 MINVALUE -999999999999999999 MAXVALUE 999999999999999999 NO CYCLE),

Col1 VARCHAR(3) CHARACTER SET LATIN NOT CASESPECIFIC,

Col2 VARCHAR(1) CHARACTER SET LATIN NOT CASESPECIFIC,

Col3 DATE FORMAT ‘YYYY-MM-DD’,

Col4 CHAR(12) CHARACTER SET LATIN NOT CASESPECIFIC,

RefDat DATE FORMAT ‘YYYY-MM-DD’

) PRIMARY INDEX (PK)

PARTITION BY RANGE_N(RefDat BETWEEN ‘2010-01-01’ AND DATE + INTERVAL ‘365’ DAY EACH INTERVAL ‘1’ DAY, NO RANGE, UNKNOWN);

SELECT CAST(COUNT(*) AS BIGINT) FROM TheDatabase. TheTable;

Count(*)

2.928.780.765 –> almost 3 billion rows!

This table filters the rows to be deleted and contains only one row.

(

SELECT

‘CODE’ AS Col1,CASE WHEN Col5 = ‘X’ THEN ‘Y’ ELSE ‘N’ END AS Col2,

CAST(substr(TheDate,1,4) || ‘-‘ || substr(TheDate,5,2) || ‘-‘ || substr(TheDate,7,2) AS DATE FORMAT ‘YYYY-MM-DD’) AS Col3

,Col5 AS Col4

FROM DummyTable GROUP BY 1,2,3,4

) WITH DATA ON COMMIT PRESERVE ROWS ;

SELECT COUNT(*) FROM TheVolatileTable;

Count(*) 1 –> exactly 1 row

The Initial Situation

The volatile table is created using this syntax in the initial setup:

CREATE TABLE AS (<SUBQUERY>);Creating and populating tables in a single step is a convenient approach. However, the query’s author overlooked the significance of data types and character sets. Character columns on the affected Teradata system are generated with the UNICODE character set by default. Regrettably, the table “TheTable” specifies these character columns as a LATIN character set, resulting in a mismatch.

CREATE MULTISET VOLATILE TABLE TheVolatileTable

(

Col1 VARCHAR(3) CHARACTER SET UNICODE NOT CASESPECIFIC,

Col2 VARCHAR(1) CHARACTER SET UNICODE NOT CASESPECIFIC,

Col3 DATE FORMAT ‘YYYY-MM-DD’,

Col4 CHAR(12) CHARACTER SET UNICODE NOT CASESPECIFIC)

NO PRIMARY INDEX ON COMMIT PRESERVE ROWS;

The DELETE statement’s execution plan for poor performance appears as follows:

WHERE (COL1, COL2, COL3, COL4) IN

(

SELECT COL1, COL2, COL3, COL4 FROM TheVolatileTable

);

1) First, we lock a distinct TheDatabase.”pseudo table” for write

on a RowHash to prevent global deadlock for

TheDatabase. TheTable.

2) Next, we lock TheDatabase. TheTable for write.

3) We execute the following steps in parallel.

1) We do an all-AMPs RETRIEVE step from

TheDatabase. TheTable by way of an all-rows scan

with no residual conditions into Spool 2 (all_amps)

(compressed columns allowed) fanned out into 50 hash join

partitions, which is built locally on the AMPs. The size of

Spool 2 is estimated with high confidence to be 2,928,780,765

rows (123,008,792,130 bytes). The estimated time for this

step is 32.77 seconds.

2) We do an all-AMPs RETRIEVE step from

TheVolatileTable by way of an all-rows scan with no

residual conditions into Spool 4 (all_amps), which is

redistributed by the hash code of (

TheVolatileTable. COL4,

TheVolatileTable. COL3,

TheVolatileTable. COL2,

TheVolatileTable. COL1) to all AMPs. Then

we do a SORT to order Spool 4 by the sort key in spool field1

eliminating duplicate rows. The size of Spool 4 is estimated

with high confidence to be 2 rows (186 bytes). The estimated

time for this step is 0.02 seconds.

4) We do an all-AMPs RETRIEVE step from Spool 4 (Last Use) by way of

an all-rows scan into Spool 3 (all_amps) (compressed columns

allowed) fanned out into 50 hash join partitions, which is

duplicated on all AMPs. The size of Spool 3 is estimated with

high confidence to be 780 rows (72,540 bytes).

5) We do an all-AMPs JOIN step from Spool 2 (Last Use) by way of an

all-rows scan, which is joined to Spool 3 (Last Use) by way of an

all-rows scan. Spool 2 and Spool 3 are joined using a inclusion

hash join of 50 partitions, with a join condition of (

“((TRANSLATE((COL1)USING LATIN_TO_UNICODE))=

COL1) AND (((TRANSLATE((COL2)USING

LATIN_TO_UNICODE))= COL2) AND ((COL3 = COL3) AND

((TRANSLATE((COL4)USING LATIN_TO_UNICODE))= COL4)))”).

The result goes into Spool 1 (all_amps), which is redistributed by

the rowkey of (TheDatabase. TheTable. ROWID) to all

AMPs. Then we do a SORT to partition Spool 1 by rowkey and the

sort key in spool field1 eliminating duplicate rows. The size of

Spool 1 is estimated with low confidence to be 12,253 rows (

220,554 bytes). The estimated time for this step is 0.50 seconds.

6) We do an all-AMPs MERGE DELETE to

TheDatabase. TheTable from Spool 1 (Last Use) via the

row id. The size is estimated with low confidence to be 12,253

rows. The estimated time for this step is 1.87 seconds.

7) Finally, we send out an END TRANSACTION step to all AMPs involved

in processing the request.

-> No rows are returned to the user as the result of statement 1.

The execution plan has multiple issues. Primarily, the join columns in the large table “TheTable” necessitate conversion from LATIN to UNICODE. This conversion must be performed for every row, totaling 3 billion times.

This operation is costly in terms of CPU usage.

Moreover, the large table is partitioned into 50 hash partitions to facilitate the sequential join. Spooling this table with billions of rows requires considerable resources — an approach forced by the need to handle the character set conversion.

Below you can see the amount of IOs and CPU seconds being used:

| TotalIOCount | AMPCPUTime | SpoolUsage |

| 3.585.081.549,00 | 71.510,38 | 1.397.760,00 |

The Tuning Approach

The query tuning was straightforward yet impactful. I generated the volatile table manually and selected the LATIN character set to align with the character sets in both tables.

(

Col1 VARCHAR(3) CHARACTER SET LATIN NOT CASESPECIFIC,

Col2 VARCHAR(1) CHARACTER SET LATIN NOT CASESPECIFIC,

Col3 DATE FORMAT ‘YYYY-MM-DD’,

Col4 CHAR(12) CHARACTER SET LATIN NOT CASESPECIFIC

) PRIMARY INDEX (Col1, Col2, Col3, Col4) ON COMMIT PRESERVE ROWS;

You can view the enhanced execution plan below.

WHERE (COL1, COL2, COL3, COL4) IN

(

SELECT COL1, COL2, COL3, COL4 FROM TheVolatileTable

);

1) First, we lock a distinct TheDatabase.”pseudo table” for write

on a RowHash to prevent global deadlock for

TheDatabase. TheTable.

2) Next, we lock TheDatabase. TheTable for write.

3) We do an all-AMPs RETRIEVE step from TheVolatileTable by

way of an all-rows scan with no residual conditions into Spool 3

(all_amps), which is built locally on the AMPs. Then we do a SORT

to order Spool 3 by the sort key in spool field1 (

TheVolatileTable. Col1,

TheVolatileTable. Col2,

TheVolatileTable. Col3,

TheVolatileTable. Col4) eliminating duplicate rows.

The size of Spool 3 is estimated with high confidence to be 2 rows

(124 bytes). The estimated time for this step is 0.02 seconds.

4) We do an all-AMPs RETRIEVE step from Spool 3 (Last Use) by way of

an all-rows scan into Spool 2 (all_amps) (compressed columns

allowed), which is duplicated on all AMPs. The size of Spool 2 is

estimated with high confidence to be 780 rows (48,360 bytes).

5) We do an all-AMPs JOIN step from TheDatabase. TheTable

by way of an all-rows scan with no residual conditions, which is

joined to Spool 2 (Last Use) by way of an all-rows scan.

TheDatabase. TheTable and Spool 2 are joined using a

inclusion dynamic hash join, with a join condition of (

“(TheDatabase. TheTable. Col1 =

Col1) AND ((TheDatabase. TheTable. Col2

= Col2) AND ((TheDatabase. TheTable. Col3 =

Col3) AND (TheDatabase. TheTable. Col4

=Col4)))”). The result goes into Spool 1 (all_amps), which

is built locally on the AMPs. Then we do a SORT to partition

Spool 1 by rowkey and the sort key in spool field1, eliminating

duplicate rows. The size of Spool 1 is estimated with low

confidence to be 12,253 rows (220,554 bytes). The estimated time

for this step is 13.06 seconds.

6) We do an all-AMPs MERGE DELETE to

TheDatabase. TheTable from Spool 1 (Last Use) via the

row id. The size is estimated with low confidence to be 12,253

rows. The estimated time for this step is 1.87 seconds.

7) Finally, we send out an END TRANSACTION step to all AMPs involved

in processing the request.

-> No rows are returned to the user as the result of statement 1.

The enhanced execution plan utilizes dynamic hash joins, which do not require spooling the 3 billion rows. Instead of spooling to rehash join columns, the Teradata Optimizer calculates the row hash “on the fly” for each row.

This minor adjustment significantly decreased resource consumption.

| IO Count | CPU Time | SpoolUsage | |

| Tuned | 1.335.246,00 | 2.916,23 | 599.040,00 |

| Original | 3.585.081.549,00 | 71.510,38 | 1.397.760,00 |

| Reduced to: | 0,04% | 4,08% | 42,86% |

Impressive statistics show a drastic decrease in IOs by 99.96%, CPU seconds by approximately 96%, and a 50% reduction in spool usage.

This successful tuning demonstrates the possibility of making significant enhancements with minimal effort. It is imperative to identify and prioritize the optimization of your most problematic workload. Consequently, improving your most inefficient queries should be among your primary objectives.

Related Services

⚡ Need Help Optimizing Your Data Platform?

We cut data platform costs by 30–60% without hardware changes. 25+ years of hands-on tuning experience.

Explore Our Services →📋 Considering a Move From Teradata?

Get a personalized migration roadmap in 2 minutes. We have migrated billions of rows from Teradata to Snowflake, Databricks, and more.

Free Migration Assessment →