Introduction to Teradata Referential Integrity

What is Teradata Referential Integrity?

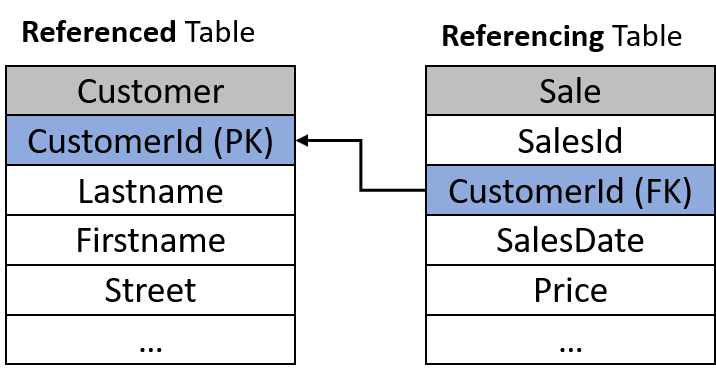

Referential integrity ensures that a value created in a foreign key (FK) column of a referencing (child) table also exists as a primary key (PK) in the referenced (parent) table. The parent table’s column does not have to be specified explicitly as PK in Teradata, but an FK can reference each unique NOT NULL column.

What are the Reasons for using Teradata Referential Integrity Features?

1. Ensure data integrity and consistency by preventing that DELETE, UPDATE, or INSERT statements can corrupt the database

2. No coding is required to ensure referential integrity manually

3. Teradata referential integrity handling is optimized and performs better than programming it yourself using SQL or another coding method.

4. Referential Integrity helps the Teradata optimizer to create better execution plans by eliminating unnecessary joins.

What are the requirements for the primary key column of the parent table?

This column must be UNIQUE. This is given if a column is either defined as a primary key or has a UNIQUE constraint or a unique index (UPI, USI).

What happens if I delete a Primary Key value from the parent table and the child table refers to this value?

Teradata prevents this. A Primary Key value can only be deleted or changed if no Foreign Key refers to this value.

What is the syntax for referential integrity?

CREATE TABLE Sale

(

SalesId INTEGER NOT NULL

CustomerId INTEGER REFERENCES Customer (CustmerId),

SalesDate DATE,

Price DECIMAL(10,2))

PRIMARY INDEX (SalesId)

);

Can the foreign and primary key columns of the child and parent tables have different data types?

No. Both data type and case sensitivity must be identical.

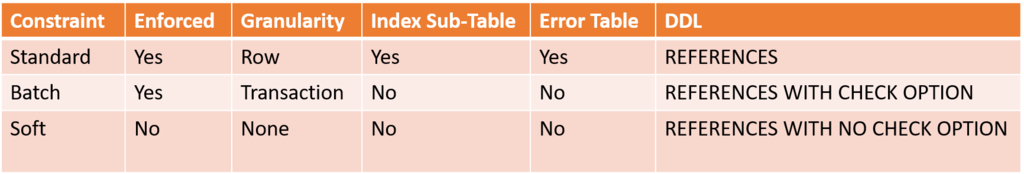

Teradata implements 3 Types of Referential Integrity.

1. What is Standard Referential Integrity?

The Standard Referential Integrity checks every Row INSERT, DELETE, or UPDATE immediately to ensure referential integrity.

A reference index sub-table is required for referential integrity.

Violation of referential integrity results in the failure of execution and generates an error report.

Because the referential integrity check is conducted on a row-by-row basis, a reduction in performance can be anticipated.

The tools TPT, Fastload, and Multiload are incompatible with tables with standard Referential Integrity constraints.

The Reference Index Sub-Table

Each referential constraint on a child table (the one with the FK) necessitates a reference index sub-table.

The reference index sub-table verifies if an UPDATE, INSERT, or DELETE operation violates referential integrity and hinders its execution.

The reference index sub-table is distributed among all AMPs through hashing, similar to a base table.

The foreign key value determines the rowhash, which guarantees that the index rows of the foreign key are consistently located in the same AMP as the primary key rows, whether they are designated by UPI, USI, or explicitly as PK.

Hashing the foreign key guarantees local referential integrity checks on each AMP without row duplication, a crucial performance element.

A reference index row includes the subsequent data:

- The ROWID of the foreign key value

- The foreign key value itself

- The number of rows in the child table containing this foreign key value

- The validity flag indicates if the foreign key value is valid (i.e., the value is available in the parent table column). The following section will discuss how referential integrity may not be given, even if defined.

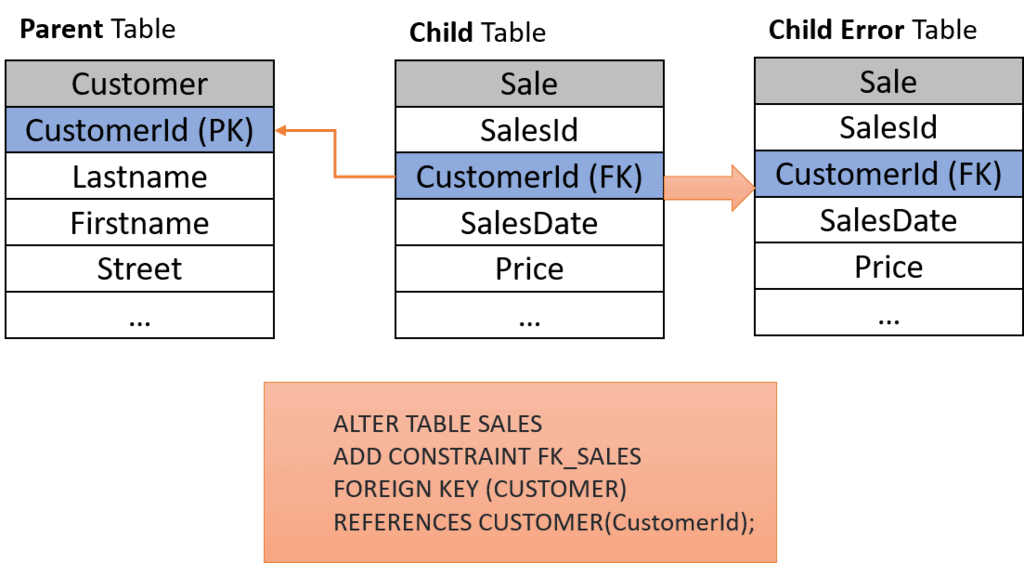

Adding Standard Referential Integrity to a populated Table – the Creation of Referential Integrity Violations

Standard referential integrity can be applied to a table that already has data.

ALTER TABLE SALE ADD CONSTRAINT FK_SALES FOREIGN KEY (CUSTOMER) REFERENCES CUSTOMER(CustomerId);

If a specific foreign key value has no primary key in the parent table, the following will occur:

- The validity flag of the reference index sub-table row is set to “false”

- INSERT, UPDATE, or DELETE statements can still be executed, even if there are invalidated index sub-table rows, i.e., the problem with the referential integrity in the child table.

- No new rows with the same invalid value can be inserted!

- An error table with the same structure as the child table is created automatically, receiving invalid rows. The error table is called exactly like the child table, but with a numeric postfix, e.g., for SALE, there is the error table SALE_0 being created.

- If there are several foreign key constraints on a child table, an error table is created for each foreign key constraint (these are numbered accordingly).

Purge Referential Integrity Violations

As demonstrated earlier, applying standard referential integrity to a populated table may result in unfulfilled integrity for certain rows.

Here are the methods to restore data integrity after implementing referential integrity checks:

ALTER TABLE SALE

ADD CONSTRAINT FK_SALES FOREIGN KEY (CUSTOMER)

REFERENCES CUSTOMER(CustomerId);- UPDATE SALE SET CustomerId = <ValidCustomerId> WHERE CustomerId = <InvalidCustomerId>;

- UPDATE SALE SET CustomerId = NULL WHERE SalesId IN (SELECT SalesId FROM SALES_0);

- DELETE FROM SALE WHERE SalesId IN (SELECT SalesId FROM SALES_0);

- INSERT INTO Customer VALUES (<MissingCustomerId>,…);

The syntax for adding Standard Referential Integrity

ALTER TABLE SALE

ADD CONSTRAINT SalesFK FOREIGN KEY (CustomerID)

REFERENCES Customer (CustomerId);2. What is Batch Referential Integrity?

Unlike standard referential integrity, which checks row-by-row, this type of integrity examines the entire transaction. If any row fails the test, the entire transaction is rolled back.

This method offers the advantage of significantly lower performance loss, as Teradata does not require an index sub-table for Batch Referential Integrity.

The loading tools TPT, Fastload, and Multiload are incompatible with Batch Referential Integrity tables, just like with Standard Referential Integrity.

The transaction’s referential integrity is verified by joining the parent and child tables, as no reference index sub-table is utilized.

The entire transaction will be rolled back if a row violates referential integrity.

Adding Batch Referential Integrity to a Populated Table – the Creation of Referential Integrity Violations

If the foreign key contravenes populated tables, the ALTER TABLE operation will terminate with Error 3513, and no error table will be generated.

The syntax for adding Batch Referential Integrity – WITH CHECK OPTION

ALTER TABLE SALE ADD CONSTRAINT SalesFK FOREIGN KEY (CustomerID) REFERENCES WITH CHECK OPTION Customer (CustomerId);

3. What is Soft Referential Integrity?

Soft referential integrity does not guarantee the enforcement of referential integrity despite its definition between tables.

Soft referential integrity helps the optimizer generate superior execution plans without the added burden of batch or standard referential integrity.

Loading tools such as Fastload, TPT, and Multiload can be used without any restrictions, as no checks are performed.

By implementing Soft Referential Integrity, you ensure data integrity. It is imperative to note that failing to do so may result in incorrect query results. The optimizer relies on this assurance.

The syntax for adding Batch Referential Integrity – WITH NO CHECK OPTION

ALTER TABLE SALE

ADD CONSTRAINT SalesFK FOREIGN KEY (CustomerID)

REFERENCES WITH NO CHECK OPTION Customer (CustomerId);Referential Integrity in Comparison

Now, let’s compare a query over two tables with referential integrity and analyze how join optimization occurs and the outcome based on the type of referential integrity employed.

We select only the columns of the child table (Sale) so that the optimizer can do join elimination.

SELECT s.* FROM Customer c INNER JOIN Sale s ON s. CustomerId = c. CustomerId;

No Referential Integrity is defined.

A join occurs consistently, but solely the columns from the sale table are chosen.

Rows with a CustomerId in the Sale Table that is not present in the Customer Table are discarded.

Standard Referential Integrity is defined

ALTER TABLE SALE

ADD CONSTRAINT SalesFK FOREIGN KEY (CustomerID)

REFERENCES Customer (CustomerId);No joining occurs.

Retrieve rows from the Sale Table where the CustomerId is not present in the Customer Table. It is important to note that this discrepancy may have arisen due to the implementation of referential integrity on a pre-existing and populated table.

Reviewing the error table for any rows and addressing any errors is important to prevent unexpected outcomes.

Batch Referential Integrity is defined.

ALTER TABLE SALE

ADD CONSTRAINT SalesFK FOREIGN KEY (CustomerID)

REFERENCES WITH CHECK OPTION Customer (CustomerId);No joining occurs.

Batch referential integrity ensures no unexpected results by preventing violations of referential integrity.

Soft Referential Integrity is defined.

ALTER TABLE SALE

ADD CONSTRAINT SalesFK FOREIGN KEY (CustomerID)

REFERENCES WITH NO CHECK OPTION Customer (CustomerId);No joining occurs.

Return the rows from the Sale Table where the CustomerId is not present in the Customer Table.

When to use what kind of Referential Integrity?

Performance Considerations

- Soft Referential Integrity does not cause performance deterioration. Use it if you are sure that your data’s referential integrity is ensured to help the optimizer create a better execution plan.

- If a row-by-row INSERT, UPDATE, or DELETE occurs, the performance loss is similar for Standard and Batch Referential Integrity. Use it when mainly single rows are processed.

- Suppose many rows are processed at once with INSERT/DELETE/UPDATE. If no referential integrity violations exist, the performance loss is usually higher with Standard Referential Integrity than with Batch Referential Integrity Integrity.

- If many rows are processed at once with INSERT/DELETE/UPDATE, and referential integrity violation occurs frequently, the rollback caused by the Batch Referential Integrity might cause substantial performance issues.

- Use batch referential integrity for batch inserts of rows only if there are usually no referential integrity violations, as the rollback in case of an error might become a performance issue.

Feature Comparison

Teradata Referential Integrity – Why is it so rarely used?

In a data warehouse, complete referential integrity is not always crucial.

It is preferable to tolerate a certain degree of inaccuracy rather than endure the decrease in efficiency resulting from standard or batch referential integrity.

Soft referential integrity can be a viable solution, provided that the referential integrity of the data is fully satisfied.

The ETL process requires significant effort to ensure proper implementation. Soft referential integrity should only be utilized after this step to avoid erroneous query results.

Please refer to the additional reading material as well:

Teradata Documentation on this topic

Related Services

🏗️ Planning a Data Platform Migration?

Architecture-first approach: we design before a single line of code is written. Zero data loss across every migration delivered.

Our Migration Services →