Unfortunately, cost-cutting often leads clients to save in the wrong areas and to start Data Warehouse projects prematurely.

As a Teradata Performance Optimization Analyst, you may encounter situations where the data model is not maintained, business specifications are unclear, and the mappings are incomplete and hard to change. Consider yourself fortunate if you can obtain any information at all from the customer’s working environment.

In my experience, it is often impossible to take full responsibility for performance on your own. Regrettably, performance optimization is frequently treated as a purely technical task.

While technical expertise may alleviate certain performance issues, it is unlikely to address the underlying causes of such problems, leaving you with numerous temporary solutions.

A purely technical strategy can reduce certain symptoms in the short run, but the total cost turns out to be alarming once it is added up. Regrettably, many companies habitually ignore this issue.

As a performance specialist, it is crucial to possess business analysis, data modeling, and development skills. This is because Performance Optimization frequently involves resolving issues with data models.

Your mission is to rectify previous shortcomings in all areas of expertise. Make an effort to reach out to individuals who were involved or accountable for these roles on behalf of the client.

Unfortunately, you may encounter uncooperative behavior when you ask people about their area of expertise, especially when you question the results of their previous work. Your mission is delicate, and strong management support would likely ease some of the difficulties.

Rectifying a substandard data model resembles a journey through time. It requires returning to the project’s inception, comprehending the initial business specifications, and examining how they were converted into the present data model and its rationale. Adopting a mindset of scrutinizing all prior decisions is essential.

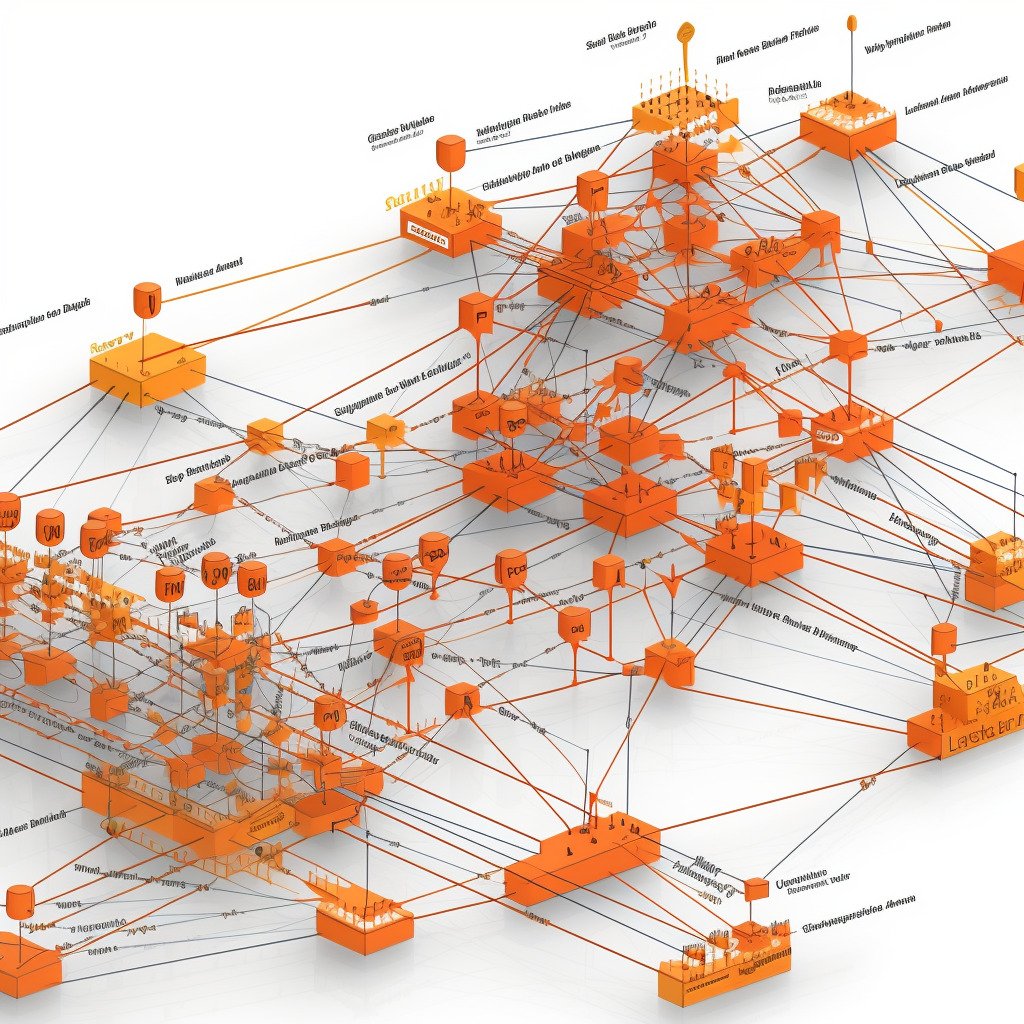

Projects often fail due to budget constraints, poor role assignments, lack of time, or inexperience. However, the primary culprit is the over-specialization of team members, resulting in diffuse responsibilities and wasted resources as individuals pass problems between one another.

If I know that only a data model redesign can address performance issues, I prefer to develop a concise prototype showcasing the enhancements.

I would pick one measurable area and rebuild its processing chain. Using the prototype as a means of communication quickly shows whether you have an opportunity to correct the issue or will receive a response such as “It would be desirable, but our funds are insufficient”. The more concrete your strategy, the more effective it is.

Success must be measurable, and reporting is typically at the end of the chain. To demonstrate expertise in Teradata performance optimization, improving report performance on the prototype is a strong starting point.
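One way to make such an improvement measurable is to compare report runtimes on the current model with runtimes on the prototype. The following is only a minimal sketch: the function name `speedup_report`, the use of the median, and the sample timings are assumptions for illustration, not part of any Teradata tooling. The runtimes themselves would have to come from your own measurements.

```python
from statistics import median


def speedup_report(baseline_secs, prototype_secs):
    """Summarize report runtimes (in seconds) measured on the current
    data model (baseline) and on the redesigned prototype.

    Hypothetical helper: the median is used so that a single unusually
    slow or fast run does not dominate the comparison.
    """
    if not baseline_secs or not prototype_secs:
        raise ValueError("need at least one measurement for each side")

    base = median(baseline_secs)
    proto = median(prototype_secs)
    if base <= 0 or proto <= 0:
        raise ValueError("runtimes must be positive")

    return {
        "baseline_median_s": base,
        "prototype_median_s": proto,
        "speedup": base / proto,                       # e.g. 5.0 means five times faster
        "time_saved_pct": 100.0 * (base - proto) / base,
    }


# Made-up timings for illustration only; replace with real measurements.
summary = speedup_report([120.0, 100.0, 110.0], [20.0, 22.0, 25.0])
print(summary)
```

A single figure such as "the report runs five times faster on the prototype" is usually easier to discuss with management than a list of technical changes.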

I trust that the key points of this article are apparent:

Today, it is insufficient to be solely a specialized developer. To fulfill the role of a performance specialist, it is imperative to possess knowledge regarding the life cycle of data warehouses.
