Isolation Levels and their Impact on Performance & Concurrency

Isolation is a crucial factor in determining the visibility of transaction integrity to database users. This property guarantees that concurrently executed transactions produce identical results to those executed sequentially. Nonetheless, relinquishing this requirement can enhance transaction concurrency, improving performance. However, this also implies accepting inconsistent outcomes.

Teradata provides varying degrees of isolation:

- Serialized

Read and write locks are used so that users only see consistent results but provide the lowest concurrency level and performance. - Read Committed

Teradata Load isolation is the topic of this blog post. Allows consistent reads while the data changes without causing a lock contention. - Read uncommitted

Dirty reads, users might see inconsistent results but high levels of concurrency and highest performance.

In the next chapter, we will describe how these isolation levels are used in Teradata.

Introduction to Teradata Load Isolation

Transaction management ensures that as many transactions as possible can concurrently access (SELECT) and change (UPDATE, INSERT, DELETE) the same database object. Data integrity is ensured by serializing the access and change operations on the same database object.

Consider the following scenarios:

- Transaction A wants to read from a table.

- Transaction B wants to update rows in the same table.

Depending on which transaction is served as the first, one of the below serial actions will take place:

- A gets the read lock on the table. B has to wait until A finishes retrieving the rows or

- B receives the write lock; A must wait until the update finishes.

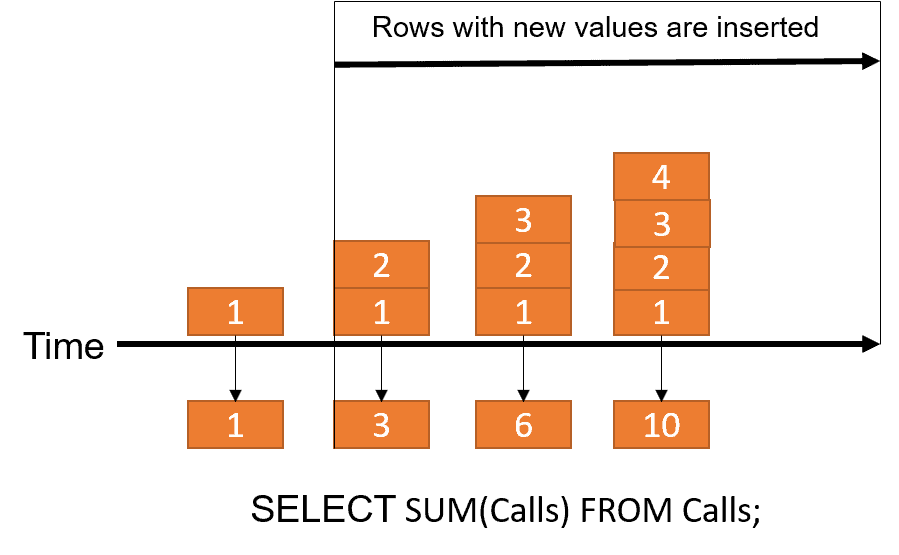

Before Teradata 15.10, there was already a method for a transaction to read from a table while a concurrent change operation occurs: the so-called dirty read; the dirty read allows the use of uncommitted rows. In the below figure, at each point in time, the uncommitted data can be read by users (which might be rolled back):

For our previously mentioned scenario, this feature would mean that A and B can take place simultaneously, but A might retrieve uncommitted rows. It will happen if the changing transaction B is rolled back after transaction A reads the rows. The dirty read is implemented in Teradata with the LOCKING FOR ACCESS modifier:

LOCKING TheTable FOR ACCESS

SELECT * FROM TheTable WHERE columnA = 10;

Teradata implements the access modifier on table and row levels, locking only one row or a small set of rows. Here is an example of the syntax for row-level locking:

LOCKING ROW FOR ACCESS

SEL * FROM TheTable

WHERE columnA = 10;

Keep in mind that Teradata transaction management may choose to upgrade the lock level if required (such as from row-level lock to table-level lock).

Access locking allows for higher performance and concurrency. It should be used whenever consistency is unnecessary, as read operations don’t have to wait for the change operations to finish and vice versa.

It can be ok to use access locking for highly aggregated reports but may be inadequate for frequently accessed tables that need 100% integrity.

Teradata Load isolation was introduced with Teradata 15.10 and allows us to use committed rows while another transaction changes them simultaneously. Load isolation adds data integrity to the functionality the LOCKING FOR ACCESS modifier already provides.

The main reason to use load isolation is the same for dirty reads: Performance improvement by increasing the workload concurrency.

Teradata implements load isolation on table level, and we can use it in our queries by adding the new LOAD COMMITTED modifier. In contrast, “locking for access” is applied only on the query level (in your SQL statement or view definition).

Teradata added several features to support load isolation:

- A new attribute is stored in the table header, identifying a table capable of load isolation.

- All table rows are versioned, using the internal “load id” column to uniquely find the different row versions.

- Each table with load isolation keeps the last committed “load id” values in the data dictionary.

The system only reads committed row versions using the “last committed load id” stored in the data dictionary. INSERT, UPDATE, and DELETE statements are implemented in the following way:

- INSERT: The new row is added with the new “load id.”

- UPDATE: A new version of the row is created (and identified by the new “load id”)

- DELETE: The row is not physically deleted. Only the “load id” of the DELETE statement is added (and kept together with the “load id” of the original INSERT). These logically deleted rows are not removed automatically from the table, but Teradata introduced a new ALTER TABLE feature to execute physical deletion manually.

Teradata Load isolation is only available for regular tables. VOLATILE, ERROR, QUEUE, TEMPORARY, or GLOBAL TEMPORARY TRACE tables cannot be defined as load isolated tables.

Furthermore, the following restrictions exist:

- Column partition tables cannot be load isolated.

- Hash indexes are not available for load isolated tables.

- A compressed join index is not allowed on a load isolated table.

- Permanent journaling is not available for load isolated tables.

- Load isolated tables cannot be part of a replication group.

Teradata Load Isolation and Indexing

Teradata Load isolation can be (or is automatic) inherited by the table’s secondary indexes and join indexes, allowing concurrent index reads of committed rows.

The USI of an isolated load table always carries the commit property of the base table row in the index row. We can use the following two statements to add the USI:

CREATE UNIQUE INDEX (PK) ON TheTable;

CREATE UNIQUE INDEX (PK) WITH LOAD IDENTITY ON TheTable;

A concurrent USI read can continue to get committed data via a single USI row access (no base table access needed).

If the NUSI of a load isolated table carries the commit property for the base table ROWIDs, the index alone can cover the query. Otherwise, the execution plan involves access to the base table. The below syntax defines behavior:

CREATE INDEX (PK) WITH LOAD IDENTITY ON TheTable; ON TheTable; -> Base table access can be avoided if NUSI is covering

CREATE INDEX (PK) WITH NO LOAD IDENTITY ON TheTable; -> Always requires a base table access

As Join indexes are pretty similar to regular permanent tables, any join index defined on the top of a regular table automatically becomes a load isolated join index. No special syntax for the join index DDL is required.

Usage of Load Isolated Tables

As load isolated tables need more permanent tablespace because:

- Versions of each row are stored and

- The necessary other information (load ids) consumes eight bytes per row.

We can temporarily turn off Teradata Load isolation to avoid versioning during a considerable change operation.

In this case, Teradata must apply exclusive locks to avoid readers using the LOAD COMMITTED modifier reading uncommitted rows.

Teradata Load isolation is applied during table creation:

CREATE TABLE TheTable, WITH CONCURRENT ISOLATED LOADING FOR ALL

(

PK INTEGER NOT NULL,

columnA INTEGER

) UNIQUE PRIMARY INDEX (PK);The FOR ALL level allows INSERT, DELETE, and UPDATE operations to be load isolated.

The FOR INSERT level is the right choice if it’s ensured that mainly INSERT operations occur on the load-isolated table. No logically deleted rows are kept, and no versions of updated rows. Read performance is better when compared against fully load-isolated tables.

If we want to turn off Teradata load isolation temporarily, we can use the FOR NONE level in the ALTER TABLE statement. We can change any permanent table from load isolated to regular and vice versa.

After we defined the load isolated table, it can be accessed by using the LOAD COMMITTED modifier:

CREATE VIEW TheTableV AS LOCKING TheTable FOR LOAD COMMITTED

SELECT * FROM TheTable WHERE columnA=10;

Read operations on this view will only return the last committed rows (identified by the system accessing the data dictionary).

If desired, the most recent – but potentially uncommitted – rows can be accessed from the load-isolated table TheTable by using the LOCKING FOR ACCESS modifier:

LOCKING TheTable FOR ACCESS

SELECT * FROM TheTable WHERE columnA=10;

Teradata Load Isolation Administrative Tasks

The system does not automatically delete logically deleted rows. A newly introduced ALTER TABLE statement has to be applied:

ALTER TABLE TheTable RELEASE DELETED ROWS;

Remember that this statement requires an exclusive lock on the load-isolated table. Additionally, we should refresh statistics after the cleanup.

Summary

Teradata Load isolation improves concurrency by retaining committed versions of table rows, which significantly advances Teradata’s transaction management. Notably, other database vendors, such as Oracle, had already incorporated the “committed read” method prior to Teradata’s implementation of this capability.

We appreciate the ability to define Teradata load isolation at a granular level for each table and the option to disable load isolation for operations that could significantly impact performance and disk usage.

I don’t like the absence of automatic garbage collection for deleted rows. Managing load-isolated tables to monitor deleted logical rows and table space usage seems like an unnecessary administrative task.

Join indexes necessitate implementing stored procedures for maintenance, whereas permanent tables can be conveniently managed via ALTER TABLE statements. It is anticipated that there will be progress in this regard in the future.

Refer to the Teradata documentation for additional information.

Related Services

🏗️ Planning a Data Platform Migration?

Architecture-first approach: we design before a single line of code is written. Zero data loss across every migration delivered.

Our Migration Services →

Excellent article and very useful!

Nice explanation for a complex topic.

Gracias Roland 🙂

Miguel