TPT Teradata – Introduction

Teradata experts are knowledgeable about the Teradata Parallel Transporter Utility (TPT) that integrates Fastload, Multiload, TPUMP, BTEQ, and Fastexport tasks into a unified application.

Teradata attempted to create a standardized tool called “The Teradata Warehouse Builder,” which failed to gain traction.

I don’t remember anyone using it, but Teradata Warehouse Builder scripts can be executed using TPT without any changes.

TPT offers a uniform syntax for all loading, updating, deleting, and extracting tasks and for executing Linux shell scripts and DDL statements. Conversely, the syntax for standalone utilities is frequently inconsistent.

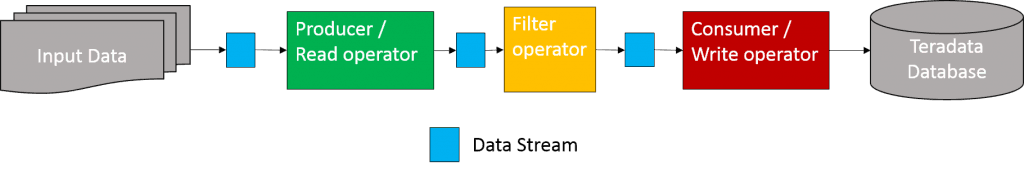

TPT for Teradata is based on the principles of data streams and operators.

Data streams cannot be accessed directly by scripts. They serve as pipelines connecting operators and are stored in the memory. No information is written on the disks.

Operators extract data from a source, such as a data stream, flat file, or ODBC connection. In addition, they can load data into a target like a table or flat file. Some operators have added functions, like creating or deleting tables. A detailed explanation of each type of operator will be presented later in this article.

To implement in-memory pipelining for file and load utilities without TPT, Linux pipes are the optimal choice.

A shell script can write a file to a named pipe from which a Fastload script can read simultaneously.

cat thefile > named_pipe &

cat named_pipe | fastload

TPT utilizes unique naming conventions and concepts in contrast to individual tools. Nevertheless, every conventional tool has a corresponding TPT operator. TPT combines all individual tools and includes supplementary functionalities.

TPT Teradata – Operators Overview

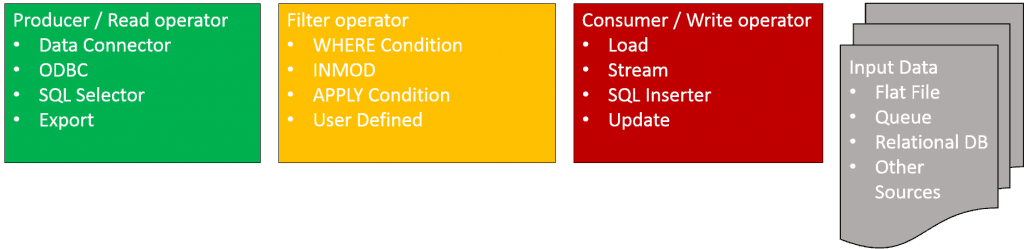

TPT operators can be classified into three groups: input operators (read), transformation operators (filter), and output operators (write).

Producers acquire data from various sources and transmit it via a data stream to consumers. They acquire this data from flat files, ODBC sources, SQL select statements, and export SQL.

Consumers retrieve data from a stream and store it in a specified table or flat file.

Producers and consumers can utilize access modules – software designed to retrieve data from various stores such as CDs, DVDs, and tape drives.

Users can choose from several access modules, such as Named Pipes for Unix, WebSphere MQ for IBM message queues, and JMS, or create and incorporate their own.

Distinguishing between consumers and producers may pose a challenge for novice Teradata users. To differentiate between the two, it may be useful to memorize the following:

Producers write only to a data stream, avoiding the target, while consumers write exclusively to the target without impacting the data stream.

Later, we will discuss the standalone operators connected through the data streams.

The image displays TPT’s extensive coverage of the ETL process.

The table below demonstrates how TPT replaces frequently used standalone utilities:

| TPT operator | Standalone utility | Task |

| DDL operator | BTEQ | Executes DDL, DCL, and self-contained DML SQL statements |

| Export operator | FastExport | Exports data from Teradata |

| Load operator | FastLoad | Loads an empty table in block mode |

| ODBC operator | OLE DB Access Module | Exports data from ODBC data source |

| OS Command operator | .OS command in BTEQ | Executes Linux commands |

| SQL Inserter operator | BTEQ | Transactionally inserts data into a Teradata table |

| SQL Selector operator | BTEQ | SQL SELECT from Teradata |

| Stream operator | Tpump | Transactionally loads Teradata tables |

| Update operator | MultiLoad | Updates, inserts, and deletes rows |

TPT differs from standalone utilities in terms of parallelism. While utilities have traditionally been used sequentially, TPT enables parallelism by sharing data streams and executing multiple operators simultaneously.

Two producer and consumer operators operate concurrently, with the former writing to a standard data stream and the latter reading from the data stream and writing to the Teradata database table. Building this configuration using standalone utilities would entail significant programming, such as Linux shell scripts. The following section will provide more information on the available operators and their integration into TPT operators.

The Producer Operators

Producer operators obtain data from a valid source and deliver it to consumer operators through a stream of information.

The Data Connector Operator (DATACONNECTOR PRODUCER):

The Data Connector Operator is bidirectional, functioning as a producer or a consumer.

When the type is DATACONNECTOR PRODUCER, a producer operator reads data from flat files or an access module, pushing the data into a data stream.

TPT reads data from a flat file using syntax resembling the “file=” statement in Fastload. Moreover, TPT can read numerous files in a directory matching a specified pattern, treating them as a single input file.

INMOD adapters can push data to the consumer operator from various sources, such as Fastload and Multiload INMOD, as well as flat files.

The Export Operator (EXPORT):

The operator replaces Fastexport by obtaining data from a Teradata table using a SELECT statement and creating a data stream. It does not create a flat file but instead streams the data to the designated output.

The SQL Selector Operator (SELECTOR):

This operator writes data to a data stream after executing a SQL SELECT statement, similar to the BTEQ export.

The ODBC Operator (ODBC):

The ODBC operator retrieves information from an ODBC data source and transfers it to a data stream.

Consumer Operators

Consumer operators retrieve and store data from a stream into a table, flat file, or access module. They solely read from data streams and then forward the obtained data to designated targets.

Consumers interact with their utilities.

The Consumer Data Connector Operator writes to a flat file when defined as a consumer, utilizing access modules as the target.

Load Operator (LOAD):

This operator provides block-level loading functionality similar to that found in Fastload.

Update Operator (UPDATE):

This operator offers enhanced block-level update functionality, similar to that of Multiload.

The Stream Operator (STREAM):

This operator implements TPUMP functionality.

The SQL Inserter Operator (INSERTER):

This operator performs the transactional BTEQ INSERT functionality.

The Fastexport OUTMOD (FASTEXPORT OUTMOD):

This operator allows for the usage of the Fastexport OUTMOD adapter.

Filter Operators

We filter the data stream using this operator.

TPT scripts allow users to call customized filters (written in C or C++), WHERE clauses, and CASE DML expressions within APPLY statements.

Standalone Operators

The OS Command Operator (OS COMMAND):

We utilize the OS operator to execute Linux commands, which supersedes the functionalities provided by the “.OS” command of BTEQ.

The DDL Operator (DDL):

This operator facilitates the execution of DDL statements, rendering it feasible to drop or create tables and indexes before data loading.

Despite its potentially misleading name, this SQL operator allows for executing statements that do not produce result sets.

We can use INSERT…SELECT, UPDATE, and DELETE statements.

The UPDATE operator is a more efficient option for deleting records than Multiload DELETE.

TPT surpasses standalone tools such as Fastload, Multiload, and BTEQ by combining their functionality into a single utility and adding support for parallelism and a unified syntax.

Related Services

🔧 Need Expert Database Administration?

Our team brings 25+ years of enterprise DBA experience across Teradata, Snowflake, and Oracle.

Meet Our Team →📋 Considering a Move From Teradata?

Get a personalized migration roadmap in 2 minutes. We have migrated billions of rows from Teradata to Snowflake, Databricks, and more.

Free Migration Assessment →

hi how does tpt update , updates the target table if there are no keys on either the source or target tables?

its really informative..a working example could have made it even better

There is a link in the article now pointing to example scripts.

Very nice intro to a set of powerful and oftentimes, not used enough, functionality. Very nicely done