What is a Teradata Query Band?

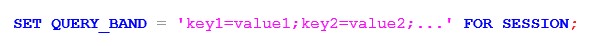

The DBQL tables provide essential optimization figures for queries run on a Teradata system. Given the high volume of daily queries, locating specific queries in the DBQL tables can be challenging. To address this issue, it is advisable to employ the Teradata query band feature to pinpoint queries. By enabling this feature (preferably for the entire session for improved performance), any key/value pair can be saved as text in the column “QueryBand” of DBC.DBQLOGTBL. The following syntax can be used to set a query band:

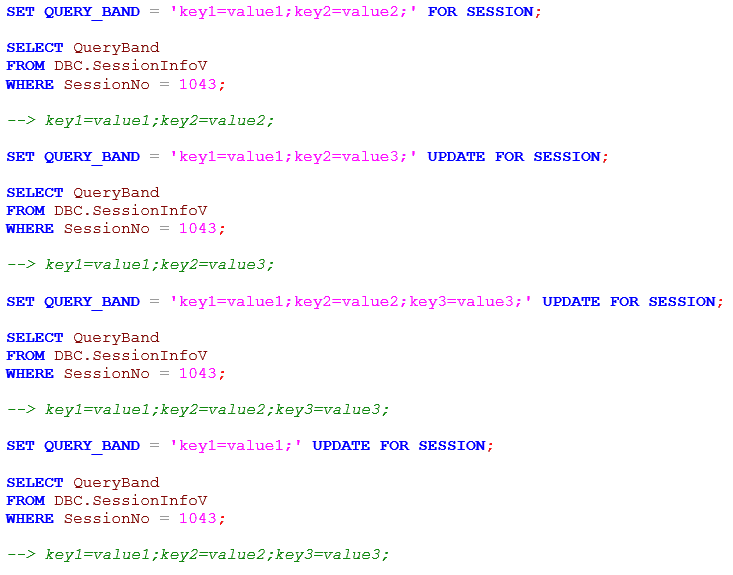

How can I change an existing Teradata Query Band?

It is possible to update a query band or add key/value pairs to an existing one. However, removing a key/value pair from a current query band in Teradata is not feasible. In such cases, the whole query band should be redefined. To see the query bands of all active sessions, refer to the DBC.SessionInfoV view.

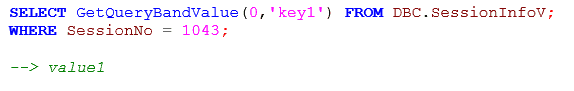

To quickly retrieve the key/value pairs from the “Queryband” column, use the GetQueryBandValue function. The function’s first parameter designates which query band to return if multiple bands exist (0 for the first, 1 for Transaction, and 2 for Session).

How Query Banding in Teradata can be used for Tuning

Assuming we have developed a more efficient SQL statement, it appears to take longer to execute when presented to a colleague. How can we confirm the validity of our optimization? Utilizing Teradata’s queryband feature, we can track the resource usage of each version of the query and compare the figures to gauge our progress accurately. This process involves wrapping the original and optimized queries in queryband tags to generate separate usage statistics. These statistics can then be efficiently read and documented for further analysis and presentation. The necessary steps to complete this process are as follows:

- We must ensure that our Teradata SQL Assistant session makes no other requests once we have set the query band.

- We need to set the Teradata query band for the unoptimized version of our SQL, run the SQL, and turn it off immediately, as shown below:

SET QUERY_BAND = ‘QUERY1=VS1;’ FOR SESSION;

Select * FROM CustomerView;

SET QUERY_BAND=NONE FOR SESSION;

- Next, we repeat the above two steps for our optimized query, using a different query band name, e.g. ‘QUERY1=VS2;’

- Finally, we can compare the result.

SELECT QueryBand, NumResultRows, NumSteps, TotalIOCount, AMPCPUTime, ParserCPUTime, NumOfActiveAMPs, MaxCPUAmpNumber, MinAmpIO,MAxAmPIO, MaxIOAmpNumber, SpoolUsage

FROM DBC.DBQLOGTBL

WHERE TRIM(QueryBand) LIKE ‘%QUERY1=%’

and QueryText LIKE ‘%SELECT%’

;

Teradata may require several minutes to transfer our activity from the cache to the DBC table. Thus, if step 4 produces no rows when executed promptly, we need not fret. To avert impatience among our intended audience, we should tailor our result presentation to accommodate this delay. We can use the FLUSH QUERY LOGGING WITH ALL statement to purge the query log cache instantly.

The importance of a measure depends on the focus and expectations of our tuning efforts. We currently possess concrete evidence of our success.

What to consider when creating a Query Band in Teradata

In a production setting, retaining pertinent variables such as application and job names, script versions, and loading dates in the query band is logical. This facilitates their accessibility in DBC.DBQLOGTBL for future analysis of performance.

For effective query band design, it is essential to implement a consistent structure that can facilitate analysis across the Teradata system’s workload.

A Performance Tuning Example with the Teradata Query Band

Reporting often involves aggregating data from a single date or a range of dates.

Assuming that the tables have start and end dates, a common join scenario involves the product table being linked with a calendar table and limited to the reporting date. An example is provided below:

SELECT cal.calendar_date as mydate, c.* FROM Client c INNER JOIN Sys_Calendar. CALENDAR cal ON cal.calendar_date BETWEEN c.hist_start_date AND c.hist_end_date WHERE mydate = DATE ‘2020-10-20’;

1) First, we lock a distinct “pseudo table” for read on a RowHash to prevent global deadlock for c. 2) Next, we lock c for read. 3) We do a single-AMP RETRIEVE step from SYS_CALENDAR.CALDATES in view CALENDAR by way of the unique primary index “SYS_CALENDAR.CALDATES in view CALENDAR.cdate = DATE ‘2020-10-20′” with no residual conditions into Spool 2 (all_amps) (compressed columns allowed), which is duplicated on all AMPs. The size of Spool 2 is estimated with high confidence to be 390 rows (6,630 bytes). The estimated time for this step is 0.01 seconds. 4) We do an all-AMPs JOIN step from Spool 2 (Last Use) by way of an all-rows scan, which is joined to c by way of an all-rows scan with a condition of (“(c.hist_end_date >= DATE ‘2020-10-20’) AND (c.hist_start_date <= DATE ‘2020-10-20’)”). Spool 2 and c are joined using a product join, with a join condition of (“(cdate >= c.hist_start_date) AND (cdate <= c.hist_end_date)”). The result goes into Spool 1 (group_amps), which is built locally on the AMPs. The size of Spool 1 is estimated with no confidence to be 1,641,096 rows (1,296,465,840 bytes). The estimated time for this step is 0.78 seconds. 5) Finally, we send out an END TRANSACTION step to all AMPs involved in processing the request. > The contents of Spool 1 are sent back to the user as the result of statement 1. The total estimated time is 0.78 seconds.

Our example query consumes the following resources: CPUTime and Disk I/Os.

TotalIoCount: 71.588,00

AMPCPUTime: 175,29

Replacing the Calendar Table with a Query Band

A lesser-known approach utilizes query banding to avoid joining with a calendar table. The reporting date is saved in the session’s query band to eliminate the need for a join.

SET QUERY_BAND = ‘mydate=2015-10-20;’ FOR SESSION; SELECT CAST(getquerybandvalue(0,’mydate’) AS DATE) AS mydate, c.* FROM Client c WHERE CAST(getquerybandvalue(0,’mydate’) AS DATE FORMAT ‘YYYY-MM-DD’) BETWEEN c.hist_start_date and c.hist_end_date AND mydate = DATE ‘2020-10-20’;

1) First, we lock a distinct “pseudo table” for read

on a RowHash to prevent global deadlock for c.

2) Next, we lock c for read.

3) We do an all-AMPs RETRIEVE step from c by way of an

all-rows scan with a condition of (“((SYSLIB.getquerybandvalue (0,

‘mydate’)(DATE, FORMAT ‘yyyy-mm-dd’))= DATE ‘2020-10-20’) AND

((c.hist_start_date <= (SYSLIB.getquerybandvalue (0,

‘mydate’)(DATE, FORMAT ‘YYYY-MM-DD’))) AND

(c.hist_end_date >= (SYSLIB.getquerybandvalue (0,

‘mydate’)(DATE, FORMAT ‘YYYY-MM-DD’))))”) into Spool 1

(group_amps), which is built locally on the AMPs. The size of

Spool 1 is estimated with no confidence to be 1,476,987 rows (

1,166,819,730 bytes). The estimated time for this step is 0.93

seconds.

4) Finally, we send out an END TRANSACTION step to all AMPs involved

in processing the request.

-> The contents of Spool 1 are sent back to the user as the result of

statement 1. The total estimated time is 0.93 seconds.TotalIoCount: 57.418,00

AMPCPUTime: 581,99

Using query banding reduces disk IOs by 20%, but increases CPU usage by 330%. The optimal choice for your environment depends on whether performance issues stem from CPU or I/O limitations.

The quantity of comparisons needed for the product join rises exponentially with the number of days on the calendar being joined. Incorporating multiple dates into the query band amplifies the superiority of the second solution by decreasing disk I/Os.

Teradata Queryband Official Documentation

Related Services

🔧 Need Expert Database Administration?

Our team brings 25+ years of enterprise DBA experience across Teradata, Snowflake, and Oracle.

Meet Our Team →📋 Considering a Move From Teradata?

Get a personalized migration roadmap in 2 minutes. We have migrated billions of rows from Teradata to Snowflake, Databricks, and more.

Free Migration Assessment →

–Rather than using the LIKE in the predicate, you could use the built-in query band functions for a more flexible and extensible solution, here’s a slightly more involved example to illustrate:

–e.g.

— Intialise the QB for business date 2014-11-01

SET QUERY_BAND = ‘QUERY1=VS1;Bus_Date=2014-11-01;’ FOR SESSION ;

— Run the before SQL for business date 2014-11-01.

SELECT blah, blah…WHERE my_bus_date = DATE’2014-11-01′;

— Update the QB for another bus_date

SET QUERY_BAND = ‘QUERY1=VS1;Bus_Date=2014-11-02;’ UPDATE FOR SESSION ;

— Run the before SQL for business date 2014-11-02.

SELECT blah, blah…WHERE my_bus_date = DATE’2014-11-02′

— Update the QB for the tuned (after) queries

SET QUERY_BAND = ‘QUERY1=VS2;Bus_Date=2014-11-01;’ UPDATE FOR SESSION ;

— Run the before SQL for business date 2014-11-01.

SELECT blah, blah…WHERE my_bus_date = DATE’2014-11-01′;

— Update the QB for another bus-date

SET QUERY_BAND = ‘QUERY1=VS1;Bus_Date=2014-11-02;’ UPDATE FOR SESSION ;

— Run the after SQL for business date 2014-11-02.

SELECT blah, blah…WHERE my_bus_date = DATE’2014-11-02′

–Reset the QB

SET QUERY_BAND = NONE;

— Now go get the results – the point of the example:

SELECT GetQueryBandValue(t1.queryband,0,’QUERY1′)

,GetQueryBandValue(t1.queryband,0,’Bus_Date’)

,t1.*

FROM dbc.dbqlogtbl as t1

WHERE CAST(GetQueryBandValue(t1.queryband,0,’Bus_Date’) AS DATE)

BETWEEN date ‘2014-11-01’

AND date ‘2014-11-03’

AND t1.QueryBand IS NOT NULL;

Now you have many more options than what the LIKE clauses can enable.

Also check out:

SYSLIB.GetQueryBandSP

SYSLIB.GetQueryBandValueSP

SYSLIB.QueryBandReservedName

SYSLIB.GetQueryBand

SYSLIB.GetQueryBandPair

in addition to SYSLIB.GetQueryBandValue