Welcome to the second part of the Teradata performance optimization series. In this part, we examine one of the main causes of performance problems in a Teradata Data Warehouse (and quite likely in any data warehouse): a poor data model.

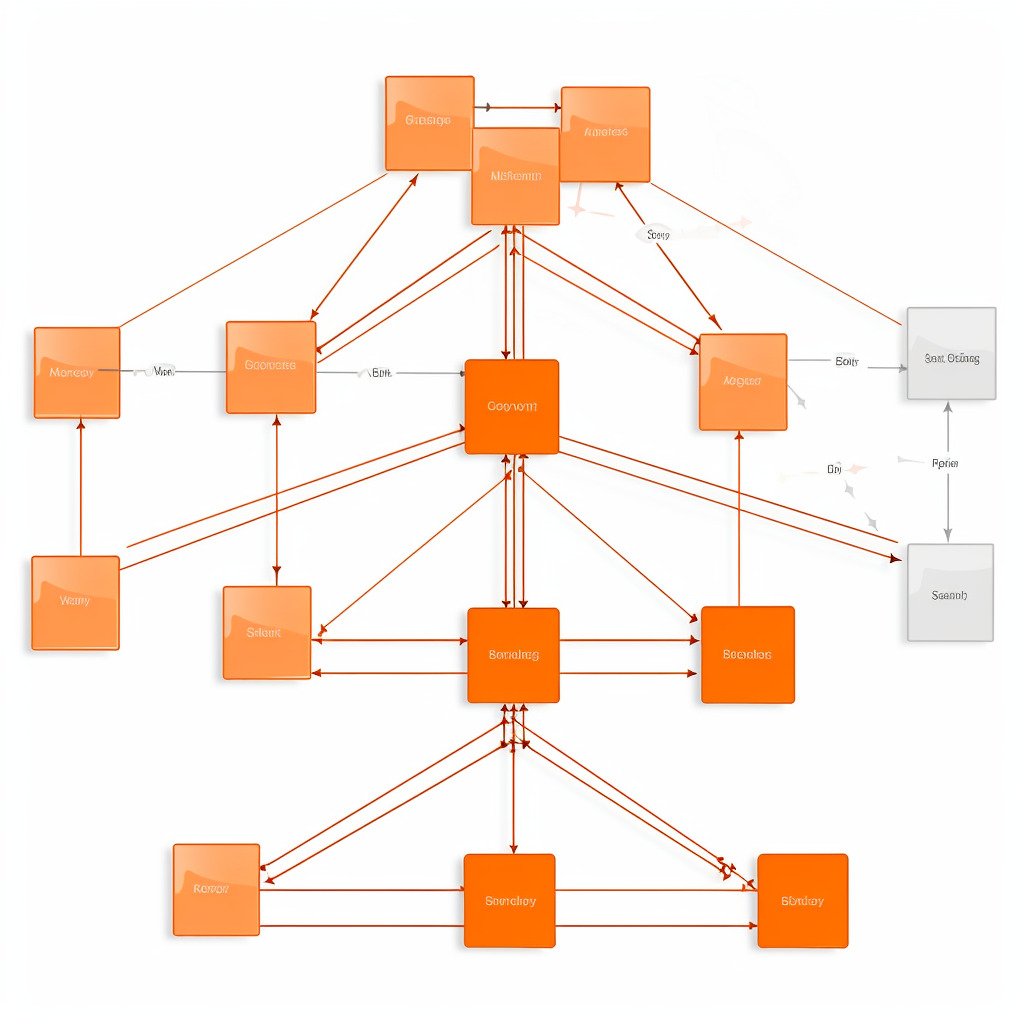

A sound data model can prevent potential performance problems in the early stages of a Data Warehouse project.

Many projects fail or deliver subpar results because clients are under budget pressure. A well-designed data model requires upfront investment, but its benefits are rarely visible right away.

Skilled data modelers are rare. From the client's point of view, hiring a developer who can load data into the database quickly seems more cost-effective than employing a data modeler who "merely draws diagrams."

The appeal of this approach is that initial results are available quickly and developers cost less than most other roles in the data warehouse job hierarchy. Within a short time, you can deliver visible results, which keeps the client satisfied and reassures them that their investment has not been wasted.

During the global economic downturn, the data warehousing sector needed a marketing term for the lower-quality approach that falling daily rates had forced upon it.

Prototyping was the new buzzword.

Prototyping is a good idea in principle. Prototypes were originally intended as a communication tool between the customer and the development team, and as a basis for requirements specification and further analysis. In practice, however, the prototype frequently becomes the final solution, and with it a dead end.

Adopting the source definitions of the operational systems directly into the data model is perhaps the most ill-advised decision that could be made.

Integrating source systems 1:1 produces an operational data store; it has nothing to do with data warehousing. Combine this with doing without surrogate keys, and you can be sure of what follows: sooner or later, a source system will be replaced by another one, and at that point a costly redesign will be waiting for you.
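To illustrate why surrogate keys matter here, the following is a minimal Python sketch, not a Teradata implementation. It assumes a hypothetical key map that assigns warehouse-owned integer keys to `(source_system, natural_key)` pairs; the class `SurrogateKeyMap`, its methods, and the system names `CRM_OLD` and `CRM_NEW` are all illustrative. It also assumes that matching an old customer to the same customer in the replacement system happens elsewhere.

```python
from dataclasses import dataclass, field


@dataclass
class SurrogateKeyMap:
    """Hypothetical key map: (source_system, natural_key) -> warehouse surrogate key."""

    next_key: int = 1
    mapping: dict[tuple[str, str], int] = field(default_factory=dict)

    def get_or_assign(self, source: str, natural_key: str) -> int:
        """Return the surrogate key for a source key, assigning a new one if unseen."""
        k = (source, natural_key)
        if k not in self.mapping:
            self.mapping[k] = self.next_key
            self.next_key += 1
        return self.mapping[k]

    def alias(self, new_source: str, new_key: str, old_source: str, old_key: str) -> int:
        """Map a key from a replacement system onto an existing surrogate key."""
        sk = self.mapping[(old_source, old_key)]
        self.mapping[(new_source, new_key)] = sk
        return sk


# Load from the original (hypothetical) CRM; fact rows store only the surrogate key.
keys = SurrogateKeyMap()
sales_facts = [{"customer_sk": keys.get_or_assign("CRM_OLD", "C-1001"), "amount": 250}]

# The CRM is replaced; the same customer now arrives with a different natural key.
keys.alias("CRM_NEW", "9f3a-77", "CRM_OLD", "C-1001")
new_sk = keys.get_or_assign("CRM_NEW", "9f3a-77")
sales_facts.append({"customer_sk": new_sk, "amount": 90})
```

Because the fact rows reference only the surrogate key, replacing the source system means adding entries to the key map rather than rewriting every table keyed on the old natural keys. Had the facts stored `C-1001` directly, each of them would have to be migrated.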

The project costs have merely been postponed, and they are likely to be significantly higher than the initial expense of hiring a skilled data modeler would have been.

Regrettably, this is the reality we live in, and we must find a way to cope with it.

I have chosen to withdraw from substandard data warehousing projects. My recommendation is to do the same: rather than competing with others for ever-lower daily rates, wait for the failed projects to be brought to you.

The next part of this series will explore options for repairing a flawed data model.
