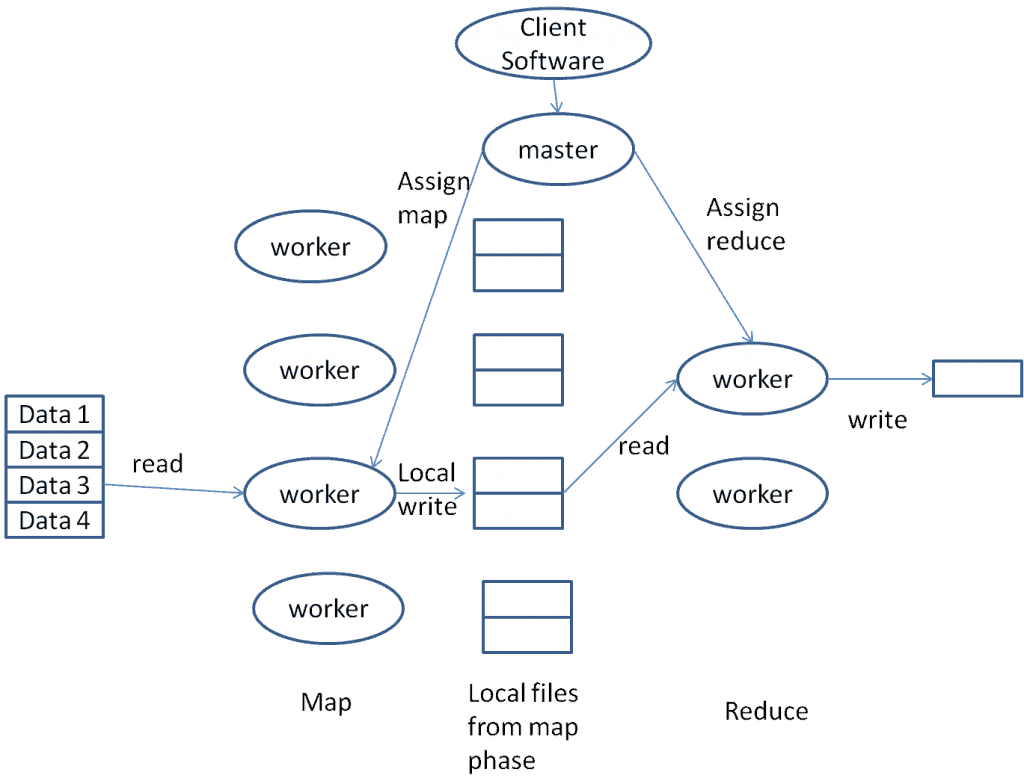

The illustration above depicts the design of real-world MapReduce implementations, such as Hadoop:

The input files reside in a distributed file system, such as HDFS in Hadoop or GFS (the Google File System) in Google's implementation.

Worker processes handle mapper or reducer tasks.

Mappers read data from the distributed file system (HDFS in Hadoop), apply the mapping function, and save the output to their local disks.

Whenever a mapper process has finished processing a specific reduce key (recall that the output of the map phase consists of key/value pairs), it notifies the master process.

The master process assigns completed keys to various reducer processes.

Worker processes are not specialized: any worker can act as either a mapper or a reducer.

When the master process notifies a reducer process of an available reduce key, it also provides the address of the corresponding mapper (and its local disk) from which the data can be retrieved.

A reducer task must wait for all mapper processes to deliver data for a specific key before it can begin processing.

The master process tracks all mapper tasks and verifies whether they have all provided data for a specific key before allowing the reduce task to start processing that key.
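The bookkeeping described above can be sketched in a few lines of Python. This is a toy model, not Hadoop's actual implementation: the `Master` class, its method names, and the address strings are all hypothetical, and it assumes every mapper reports for every key (possibly with an empty result).

```python
from collections import defaultdict


class Master:
    """Toy coordinator: tracks which mappers have reported each reduce key."""

    def __init__(self, mapper_ids):
        self.mapper_ids = set(mapper_ids)
        # key -> {mapper_id: address of that mapper's local output for the key}
        self.reported = defaultdict(dict)

    def mapper_done(self, mapper_id, key, address):
        """Record that a mapper has finished writing its data for `key`."""
        self.reported[key][mapper_id] = address

    def ready_keys(self):
        """Keys for which every mapper has reported, so a reducer may start."""
        return sorted(
            key for key, sources in self.reported.items()
            if set(sources) == self.mapper_ids
        )

    def sources_for(self, key):
        """Addresses a reducer should fetch from to collect the data for `key`."""
        return sorted(self.reported[key].values())


# Hypothetical run with two mappers; addresses are made up for illustration.
master = Master(mapper_ids=["m1", "m2"])
master.mapper_done("m1", "apple", "host-a:/tmp/m1/apple")
master.mapper_done("m1", "pear", "host-a:/tmp/m1/pear")
master.mapper_done("m2", "apple", "host-b:/tmp/m2/apple")

print(master.ready_keys())          # ['apple']
print(master.sources_for("apple"))  # ['host-a:/tmp/m1/apple', 'host-b:/tmp/m2/apple']
```

Here `pear` is not yet ready because only `m1` has reported it, so a reducer for that key would have to keep waiting.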

MapReduce offers superior fault tolerance compared to traditional database systems, which is one of the paradigm’s key advantages.

Consider a conventional database system such as Teradata.

Despite the various fault tolerance features in a Teradata system, it is difficult to imagine scaling it to hundreds of thousands of processes.

At that scale, probability alone makes it all but certain that some process will eventually fail.

Let’s try a straightforward example:

If each process independently has a 1% probability of failing, the chance that at least one of n processes fails is 1 - 0.99^n, which is effectively 100% for hundreds of thousands of processes. Failure is therefore a certainty in such a large system, a scenario that is increasingly common in the era of Big Data.
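To make the arithmetic concrete, here is a minimal sketch that evaluates this probability for a few process counts. It assumes failures are independent, which is a simplification; the function name is chosen for illustration only.

```python
def prob_at_least_one_failure(p: float, n: int) -> float:
    """P(at least one of n independent processes fails) = 1 - (1 - p)^n."""
    return 1.0 - (1.0 - p) ** n


# 1% failure probability per process, as in the example above.
for n in (1, 10, 100, 1_000, 100_000):
    print(f"{n:>7} processes: {prob_at_least_one_failure(0.01, n):.4f}")
```

With only 100 processes the probability is already about 63%, and well before 100,000 processes it is indistinguishable from 1.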

MapReduce implementations therefore prioritize fault tolerance.

The same statistical rules apply to MapReduce. Consider a MapReduce job that spawns numerous mapper and reducer processes. Such a job can run for extended periods, processing large volumes of data while performing complex computations such as pattern recognition.

A master process regularly communicates with all mapper and reducer processes and logs the processed key ranges for each task.

If a mapper fails, the master assigns the failed mapper's entire key range to another mapper, which reads the input from HDFS again and reprocesses the same range.

If a reducer fails, the same approach applies and a new reducer is assigned to the task. However, keys for which output has already been generated do not need to be reprocessed.
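The difference between the two recovery cases can be sketched as follows. This is an illustrative model only: `keys_to_reprocess` and its arguments are hypothetical names, and it assumes the master's log tells it which keys the failed task had already completed.

```python
def keys_to_reprocess(kind, assigned_keys, completed_keys):
    """Keys a replacement worker must handle after the original worker failed."""
    if kind == "map":
        # A failed mapper's whole range is redone from the distributed file system.
        return list(assigned_keys)
    if kind == "reduce":
        # A replacement reducer skips keys whose output was already generated.
        done = set(completed_keys)
        return [k for k in assigned_keys if k not in done]
    raise ValueError(f"unknown task kind: {kind!r}")


assigned = ["a", "b", "c", "d"]
completed = ["a", "b"]

print(keys_to_reprocess("map", assigned, completed))     # ['a', 'b', 'c', 'd']
print(keys_to_reprocess("reduce", assigned, completed))  # ['c', 'd']
```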

The master process monitors all activities within the MapReduce job and handles failures gracefully, even in systems with many processes.

Additionally, even without actual failures, the master identifies slow mappers or reducers and reassigns their key ranges to new processes.
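A rough sketch of how a master might pick tasks to reassign is shown below. Everything here is an assumption made for illustration: the `Task` fields, the heartbeat timeout, and the rule that a task is "slow" when its progress is below half the average of the live tasks. Real implementations use their own heuristics.

```python
from dataclasses import dataclass


@dataclass
class Task:
    task_id: str
    kind: str              # "map" or "reduce"
    key_range: tuple       # (first_key, last_key) assigned to the task
    worker: str
    last_heartbeat: float  # time the master last heard from the worker
    progress: float = 0.0  # fraction completed, from 0.0 to 1.0


def plan_reassignments(tasks, now, idle_workers, timeout=10.0, slow_factor=0.5):
    """Return (task_id, new_worker, reason) tuples for failed or slow tasks."""
    running = [t for t in tasks if t.progress < 1.0]
    alive = [t for t in running if now - t.last_heartbeat <= timeout]
    avg = sum(t.progress for t in alive) / len(alive) if alive else 0.0
    spare = list(idle_workers)
    plan = []
    for t in running:
        if not spare:
            break  # no idle worker left to take over
        if now - t.last_heartbeat > timeout:
            plan.append((t.task_id, spare.pop(0), "failed"))
        elif t.progress < slow_factor * avg:
            plan.append((t.task_id, spare.pop(0), "slow"))
    return plan


tasks = [
    Task("map-0", "map", ("a", "f"), "w1", last_heartbeat=100.0, progress=0.9),
    Task("map-1", "map", ("g", "m"), "w2", last_heartbeat=80.0, progress=0.4),
    Task("map-2", "map", ("n", "z"), "w3", last_heartbeat=99.0, progress=0.1),
]

print(plan_reassignments(tasks, now=100.0, idle_workers=["w4", "w5"]))
# [('map-1', 'w4', 'failed'), ('map-2', 'w5', 'slow')]
```

In this run `map-1` has been silent for 20 seconds and is treated as failed, while `map-2` is still responding but lags far behind the other live task, so its key range is handed to a fresh worker as well.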

The master process is a single point of failure: if it fails, all MapReduce tasks fail.

Statistical probabilities must be considered here as well.

Although the probability that at least one of the numerous worker processes fails is nearly 100%, the likelihood that the one specific master process fails is small. Consequently, MapReduce implementations tolerate failures well and are better suited to large-scale tasks than conventional database implementations.
